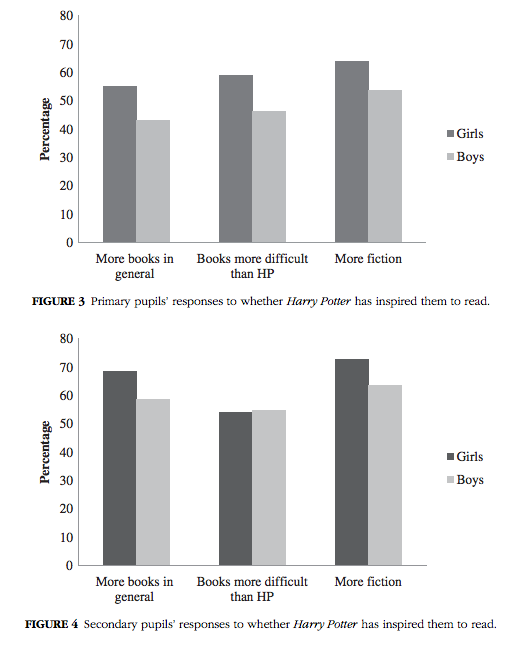

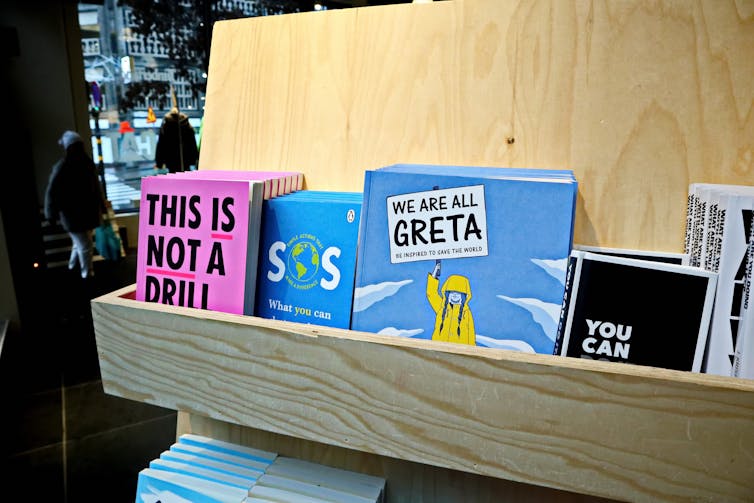

It’s that time of the year again. Brochures and emails spruik a bumper crop of new books about the climate crisis.

Goodreads

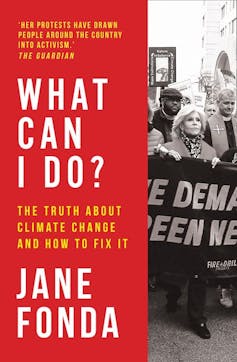

This time there are some really big names: How to Avoid a Climate Disaster by Bill Gates, Climate Crisis and the Global New Deal by Noam Chomsky and Robert Pollin, All We Can Save by Ayana Elizabeth Johnson and Katharine K. Wilkinson, What Can I Do? The Truth About Climate Change and How to Fix It by Jane Fonda, as well as new efforts from David Attenborough and Tim Flannery.

The incoming tide of new books makes me reflect and wonder whether writing still more books about climate change is a waste of precious time. When the UN is calling for governments to act to achieve carbon neutrality by 2050, are books just preaching to the converted? My answer is no, but that doesn’t mean publishing, buying or reading more books is the answer to our climate emergency right now.

Decades of books

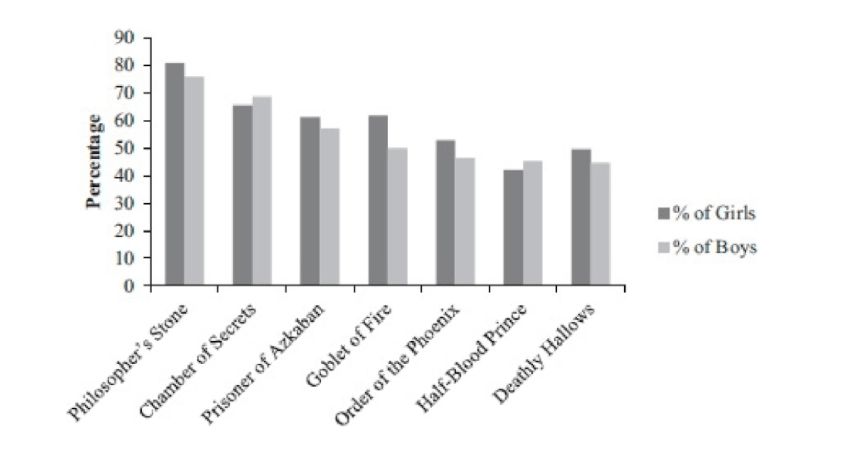

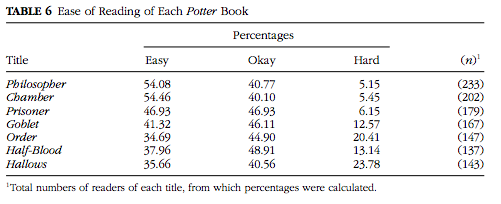

In April, on the 50th anniversary of Earth Day, the New York Times told readers this might be the year they finally read about climate change. But many already have.

The earliest titles date back to 1989: The Greenhouse Effect: Living in a Warmer Australia by Ann Henderson-Sellers and Russell Blong; my own contribution, Living in the Greenhouse; and the first book aimed at the US public, Bill McKibben's The End of Nature.

Goodreads

The science was still developing then. We knew human activity was increasing the atmospheric concentration of greenhouse gases like carbon dioxide and methane. Measurable changes to the climate were also clear: more very hot days, fewer very cold nights, changes to rainfall patterns.

The 1985 Villach conference had culminated in an agreed statement warning there could be a link, but cautious scientists were saying more research was needed before we could be confident the changes had a human cause. There were credible alternative theories: the energy from the Sun could be changing, there could be changes in the Earth’s orbit, there might be natural factors we had not recognised.

By the mid-1990s, the debate was essentially over in the scientific community. Today there is barely a handful of credible climate scientists who don’t accept the evidence that human activity has caused the changes we are seeing. The agreed statements by the Intergovernmental Panel on Climate Change, the IPCC, led to the Kyoto Protocol being adopted in 1997.

And so, as the urgency felt by scientists increased, more books were published.

Former US vice president and 2007 Nobel Prize winner Al Gore's book Our Choice: A Plan to Solve the Climate Crisis was first published in 2009 and has since been issued in 20 editions. There have been more than enough books to furnish a list of the top 100 bestselling titles on the topic, recommended by the likes of Elon Musk and esteemed climate scientists and commentators. The ones I have acquired fill an entire bookcase shelf: dozens of titles describing the problem, making dire predictions and calling for action.

Becca Tapert/Unsplash, CC BY

Deeds not words

Does the new batch of books risk spreading more despair? If the previous books didn't change our climate trajectory, then what is the point in making readers feel the cause is hopeless and a bleak future is inevitable?

Goodreads

No. Writing more books isn't a waste of time, but it shouldn't be a high priority at the moment either. The point of writing a book is to summarise what we know about the problem and identify credible ways forward.

Those were my goals when I wrote Living in the Greenhouse in 1989 and Living in the Hothouse in 2005. The main purpose of the first book was to draw attention to a problem that was largely unrecognised, trying to inform and persuade readers that we needed to take action. By the release of the second book, the aim was to counter the tsunami of misinformation unleashed by the fossil fuel industry, conservative institutions and the Murdoch press. Rupert Murdoch spoke at News Corp’s AGM this week, maintaining: “We do not deny climate change, we are not deniers”.

But there are two reasons why I’m not working on a third book right now.

The first is time. If I started writing today, it would be late next year before the book would be in the shops. We can’t afford another year of inaction. More importantly, the inaction of our national government is not a result of a lack of knowledge.

On November 9, United Nations chief António Guterres said the world was still falling well short of the leadership required to achieve net-zero carbon emissions by 2050:

Our goal is to limit temperature rise to 1.5 degrees Celsius above pre-industrial levels. Today, we are still headed towards three degrees at least.

Some believe the inaction is explained by the corruption of our politics by fossil fuel industry donations. Others see a fundamental conflict between the concerted action needed and the dominant ideologies of governing parties. Making decision-makers better informed about the science won't solve either of these problems.

They might be solved, however, by the evidence that a growing majority of voters want to see action to slow climate change.

And the COVID-19 pandemic has focused the community's attention on the risks of climate change rather than distracting from them. A recent survey by the Boston Consulting Group of 3,000 people across eight countries found about 70% of respondents are now more aware of the risks of climate change than they were before the pandemic. Three-quarters say slowing climate change is as important as protecting the community from COVID-19.

The growing awareness and sense of urgency are backed by another recent study looking at internet search behaviour across 20 European countries. Researchers found signs of growing support for a post-COVID recovery program that emphasises sustainability.

Shutterstock

Change is happening, more is needed

Still, preaching to the converted is not necessarily a bad thing. They might need to be reminded why they were persuaded that action is needed, or need help countering the half-truths and barefaced lies being peddled in the public debate. Books can fulfil that mission. So can speaking to community groups, which I do regularly.

I tell audiences the urgent priority now is to turn into action the knowledge we have about the accelerating impacts of climate change and economically viable responses. Our states and territories now have the goal of zero-carbon by 2050, so I am giving presentations spelling out how this can be achieved. We urgently need the Commonwealth government to catch up to the community.

Unsplash/Markus Spiske, CC BY

Change is happening rapidly. More than 2 million Australian households now have solar panels. Solar and wind provided more than half of the electricity used by South Australia last year, and that state achieved a world first on the morning of October 11: for a brief period, its entire electricity demand was met by solar panels.

The urgent task is not to publish more books on the crisis, but to change the political discourse and force our national government to play a positive role.

Ian Lowe, Emeritus Professor, School of Science, Griffith University

This article is republished from The Conversation under a Creative Commons license. Read the original article.

My Comment: I think an important point about books on a topic is their role in influencing the zeitgeist and creating the groundswell for change. While books are only a small part of that, they do tend to lend credibility to any argument and push for change (which is why there is such a large number of science denial, political revisionism and blatant propaganda books published by various think tanks, pundits and reactionaries trying to legitimise nonsense).

But we also have to acknowledge that at some point books are less about communicating ideas and influencing the zeitgeist and more about grift. There is money to be made by writing books. Publishers certainly make money with those books (and by publishing contrarian books… you know, for balance… and cash). And no small part of this grift is selling those books to well-meaning people who will feel like reading the books counts as doing something about the issues raised.

So we have to remember, both as readers and writers, that the knowledge step of the book is only as valuable and meaningful as what we do with that knowledge.