From Hard Drive: Stephen King helps George R. R. Martin finish the A Song of Ice and Fire series.

Category: Fun Articles

My own take on life expressed with as much irreverence as possible.

I love honey.

Actually, that’s a lie. I’m ambivalent toward honey; I could take it or leave it.

Anyway, bees: kinda important. But not as important as recent internet and media talk would have us believe.

Here’s the problem summed up by the Penn State entomology group (dated August 2013):

At an international pollinator conference held at Penn State last week, the general consensus was that Colony Collapse Disorder (CCD) of honey bees is caused by multiple factors including: a) viruses and diseases; b) two species of mites; c) poor nutrition caused by foraging in sugar poor crops like cucurbits; d) the stress of interstate travel and e) pesticide exposure. Despite this, however, most of the research presented at the conference concentrated on pesticide exposure with a general call for banning a group of insecticides known as the neonicotinoids. This of course seems to be an easy fix to a complex problem that is still not completely understood, but of course is popular with the public and many ecologists that have never worked with pesticides or IPM. This stance does not take into account the reason these products were developed in the first place which was to replace human toxic OP pesticides and replace them with something safer as mandated by the Food Quality Protection Act. Neonicotinoid insecticides have also proven to be safer to most beneficial insects other than bees and promote the biological control of pests such as San Jose Scale, Woolly Apple Aphid, European Red Mite, leafminers, and leafhoppers to name a few. A general ban of neonicotinoid insecticides would cause a reversion back to OP, carbamate and pyrethroid insecticides which would totally destroy current IPM programs and cause growers an additional $50 to $100+ per acre in secondary pest sprays.

But here’s the other point that a lot of scares about pesticides miss:

Moreover, the authors do not account for the fact the France still observes CCD each year, even though they banned neonicotinoids 5 years ago. Nor do they note that beekeepers in Canada and Australia and parts of Europe use neonicotinoids, but do not observe CCD. Finally, they do not note that CCD has been taking place regularly for hundreds of years. We reviewed this in an article last year.

Update and aside:

There’s an important point in this piece that wasn’t covered. The discussion here is completely focused on European Honey Bees that are used in agriculture. They’re domesticated bees. We have plenty. But native bees are suffering the same issues that all animals and plants are faced with: a severe case of humans.

This explainer video from Vox is very good at covering that part of the issue.

Back to the article on honey bees.

Crops aren’t really affected: http://www.rba.gov.au/publications/bulletin/2011/jun/images/graph-0611-3-02.gif

Beehives aren’t exactly going extinct either: http://faostat3.fao.org/faostat-gateway/go/to/search/bee/E http://www.daff.gov.au/__data/assets/image/0011/1942481/graph1.gif

About 30% of our food, mainly fruit and nuts, is pollinated by bees, but almonds are the only common crop that relies almost exclusively on bees. Not that it’s at all plausible that we will lose bees, as I hope I’ve already demonstrated. The headline of that article, “Scientists discover what’s killing the bees and it’s worse than you thought”, is not supported by the text at all.

CCD or events that meet the description have been happening since at least the 19th century, but it’s entirely possible that there are modern reasons for it occurring now, and I’d say that seems especially likely on account of the very stark geography involved – where places like the USA and some European countries are badly hit, while other regions are completely unaffected.

The explanation that’s been favoured by actual scientists for a long time is that it’s a consequence of many factors. People with ideologies to confirm, dare I say, are quick to point the finger at various “chemicals”, but we know that it’s not just those chemicals, because other places that use them have no CCD.

But I go back to my earlier point, which is that it’s important to remember that this is no threat to the existence of bees, or plants that rely on bees. It’s very easy to breed bees and create new colonies. We could easily make the global bee population 100 times larger than it is now within this year if we really wanted to. CCD increases the costs to beekeepers, increases the cost of honey, and very marginally increases the costs of some fruits and nuts, within particular regions. It doesn’t threaten food security and it doesn’t threaten the existence of bees.

The European honey bee contributes directly to the Australian economy through the honey industry and to a lesser extent the packaged bee, bees’ wax and propolis sectors. Honey bees also contribute to the productivity of many horticultural crops, by providing essential pollination services that improve crop yield and quality. The Australian honey and bee products industry is valued at approximately $90 million per year.

It is estimated that bees contribute directly to between $100 million and $1.7 billion of agricultural production, mostly from unpaid sources such as feral bee colonies, but also from a small paid pollination industry of about $3.3 million, per year.[1]

This estimate refers to 35 of the most responsive crops to honeybee pollination. If all agriculture is included, the estimates have run as high as $4-$6 billion.[2]

The industry is composed of about 10,000 registered beekeepers. Around 1,700 of these are considered to be commercial apiarists, each with more than 50 hives, and there are thousands of part-time and hobbyist apiarists, with total honey production around 16,000 tonnes of honey each year. http://www.daff.gov.au/animal-plant-health/pests-diseases-weeds/bee

A lot of people like to pretend that there is also a link between GMOs and CCD. This is utter nonsense. Firstly, it is a poisoning-the-well fallacy; secondly, there is nothing wrong with GMOs (check this list of 600+ safety studies, this list of articles on how good GMOs are, and this series on the Food Wars); and thirdly, there is no actual link between GMOs and the supposed chemicals pressuring bee populations. This last point is very important, as it reveals a conflation of issues, deliberate or accidental, that betrays a lack of reading/understanding of the science of both GMOs and CCD.

http://io9.com/ask-an-entomologist-anything-you-want-about-the-disappe-1616898038

Anyway, the real cause of CCD is the South Carolina divorce rate: http://tylervigen.com/view_correlation?id=558

http://www.examiner.com/article/bees-are-found-to-die-from-insecticide-insignificant-new-paper

More on GMOs: Scientific American comes out in favour of GMOs:

http://www.scientificamerican.com/article/labels-for-gmo-foods-are-a-bad-idea/

http://blogs.scientificamerican.com/the-curious-wavefunction/2013/09/06/scientific-american-comes-out-in-favor-of-gmos/

http://beta.cosmosmagazine.com/society/how-we-perceive-risk-gmos

http://beta.cosmosmagazine.com/society/seeds-deception

http://beta.cosmosmagazine.com/society/environmentalists%E2%80%99-double-standards

Let’s dive into the book and movie that made Sean Connery give up acting and Alan Moore give Hollywood the finger.

This is one of those rare instances where I can say I didn’t like the book or the movie.

Back when I was graduating from junior to adult fiction, I went through a phase of reading all of the classic adventure novels. Everything from Tom Sawyer to Dracula. As such, I was familiar with every character Alan Moore put into his comic and none of them sat well with me. They were all slightly facile and nastier versions of the characters and stories I’d appreciated – love is far too strong a word.

When it came to the movie, I was blown away by how terribly ham-fisted it all was. Nothing in the movie really worked, despite there clearly being some talent involved.

For me, the worst part of the movie was Dorian Gray. I’d only gotten to that novel shortly before The League of Extraordinary Gentlemen movie came out, so the character was fresh in my mind. To say that the character portrayed and the one from the novel were nothing alike is an understatement. Even the comic version takes only the CliffsNotes version of the character.

It makes you wonder why either the book or the movie decided to use these public domain characters rather than create their own.

Oh look, Moore has commented on that, saying:

“The planet of the imagination is as old as we are. It has been humanity’s constant companion with all of its fictional locations, like Mount Olympus and the gods, and since we first came down from the trees, basically. It seems very important, otherwise, we wouldn’t have it.”

And:

“…it could be said that the theme of using popular fictional characters to comment on cultural and political mores has been carried over to ‘The Black Dossier’ and the next volume of ‘The League of Extraordinary Gentlemen.’” Source.

Or in other words, he thought it would be a cool narrative technique that might attract some readers. Not sure what the movie makers were thinking other than “franchise, franchise, franchise” while dancing in a conga line.

If this post has a point, I’m not sure what it is, but rest assured that I can’t find a word to rhyme with is.

I know that Dr Seuss is regarded as something of a big deal, particularly in the USA, but I was never really taken in by his books. Aside from Green Eggs and Ham, none of them has really stuck with me as stories.

And then we have the adaptations, the above-mentioned How the Grinch Stole Christmas and The Cat in the Hat. Both of these films came at a time when I’d had enough of Jim Carrey and Mike Myers. It’s really hard to enjoy a film that feels more like an actor’s shtick than a character being brought to life.

So why am I discussing this Lost in Adaptation episode then?

Look, it was an interesting video, okay! I can find insights into artistic endeavour interesting without having to find that art to my taste!

Let’s have a look at one of the adaptations of Matilda by Roald Dahl in this What’s the Difference?

Recently our youngest has been on something of a Roald Dahl and Dick King-Smith read-a-thon. She is very much looking forward to the new Matilda film coming out soon and very much enjoyed the book.

Part of the reason for her enjoyment was that, unlike the film of The Water Horse by Dick King-Smith, the movie’s protagonist is a girl, just like in the book.

“Why would they make the girl into a boy for the film?”

Yes, Hollywood, why indeed.

I can vaguely remember watching the 90s film Matilda and enjoying it. Our youngest loved it. And we’ll inevitably sit down as a family to watch the new version. This is in no small part due to the lack of kids’ books and adaptations featuring a female protagonist. You have to make the most of the handful of female-led books and movies aimed at the middle-grade audience (YA is better served but comes with slightly more stabbings and blood-drinking than we’re comfortable exposing a kid to).

As with many of Dahl’s children’s books, the treatment of kids comes from a different era. Matilda was published in 1988, yet much of the way schools were run had already begun to change by then. In many ways, the mistreatment of students by teachers would probably feel more familiar to my parents’ generation than it does to me, and it feels odd to our kids. Yet it still manages to be entertaining to kids, if our children are any barometer of what kids these days like.

Update: the new adaptation was good. Our youngest has already watched it twice.

If you like spies, then this instalment of Lost in Adaptation will be for you.

Many, many years ago I decided I loved spy novels and read the Game, Set, Match series by Len Deighton. Not satisfied with books that mostly went over my head, I took a recommendation to read some John le Carré. Again, I feel like the much younger me got lost in the ins and outs of the spy world of le Carré’s stories.

But then two things happened. The first was a pretty decent film adaptation of Tinker Tailor Soldier Spy. Then they cast Tom Hiddleston in a star-studded series adaptation of The Night Manager. So, obviously, I was ready for my le Carré.

I think the TV series was okay. The acting was just terrific, particularly from a relative Aussie newcomer in Elizabeth Debicki, but a lot of the scenes and details felt contrived. This really undermined any tension for me.

For example, the main antagonist played by Hugh Laurie is continually suspicious of everyone around him, but he is just a little bit too ready to accept Tom Hiddleston’s protagonist into the fold. “Here you go, you’re now in charge of one of my shell companies!” This would have been more convincing if the arrangement had also given him blackmail material or some other leverage against a potential spy (or maybe it did and I’ve just forgotten that detail).

At some stage, the more mature and debonair me will revisit some of the books and authors I read when I was probably too young to appreciate them. Le Carré and Deighton are on that list.

I will resist the urge to use Burgess’ slang throughout this What’s the Difference? on A Clockwork Orange.

Despite having previously covered A Clockwork Orange, Dominic’s video raised some points I didn’t discuss.

The mention of the missing chapter in the US editions of the book reminded me of the far more acceptable British version I had read. Because it has been quite some time since I read the book, I had entirely forgotten that Burgess’ ultimate point was that people grow up, that they can actually change. Leaving this out of the US version, and thus the film, is both bad form and entirely American.

Given that most of the novel is essentially a drawn-out complaint about kids these days, it’s kinda important to acknowledge that ultimate point by Burgess. But in the land of gun-toting ‘Mericans, it makes sense they’d prefer the ending that justifies them standing on their porch with a shotgun grunting “get off my lawn.”

The other thing I was reminded of was the glossary. Burgess included a section (at the end? It could have been at the beginning) that roughly translated the slang into something approaching English. I can still remember continuously flipping back and forth as I read, deciphering as I went. It wasn’t a long novel, but I do remember doing this for the whole book.

I wonder if a more mature me would have more or less trouble with this slang aspect? I do know the more mature me would certainly have less patience.

The spice must something something.

While I don’t want to make a habit of doing multiple posts about books that get multiple movie adaptations, I’m doing it for Dune. Previously I discussed the very 80s adaptation of Dune. And now I’ve finally gotten around to watching the Denis Villeneuve version.

It was fine.

I’ve watched a few sci-fi adaptations lately that spent a lot of time screaming “THIS IS SCI-FI” at the audience *cough Foundation cough*. So I was happy to see something so obviously sci-fi that didn’t do that. I also really like the world-building, which was mostly just long shots of locations. Made everything feel big.

But the new Dune kinda felt like an overly long movie free of tension and stakes. The soundtrack was bland and built zero themes to call back to and emphasise key moments. Despite the runtime and world-building, there were still gaping holes in the story and its consistency. I liked what they tried to do with the timeline visions, but it was a little confusing and could have been done better. And Jason Momoa managed to stand out in his role despite showing up as Jason Momoa.

To give an example of the consistency issue, I’ll mention the final scene (spoilers). Paul is shown to be capable with a knife early on but doesn’t show himself to be a warrior. Meanwhile, we get a heap of rhetoric about how tough and awesome the Fremen are, even a scene where they ambush and wipe out a unit of elite Sardaukar. Then in the final scene, Paul just casually bests a high-ranking Fremen (/spoilers). This all felt really inconsistent and in desperate need of establishment.

I will say that many of the issues with the movie are also issues with the source material, including the one I highlighted above. But for the most part, this was a pretty good adaptation. I’m just not sure it was a great film.

My review of the Dune novel is here, and of the series here.

This month in What’s the Difference? let’s discuss a classic five-part novel trilogy and its movie adaptation.

I love the Hitchhiker’s books. In the above videos, Dominic Noble covers a lot of what was changed from the book(s) to the movie and I agree with his points about how they managed to ruin the adaptation. But unlike Dominic, I don’t have any particularly strong feelings about the movie. I think this comes down to how I largely dismissed the film as either:

- A very American homage to the Hitchhiker’s books, or;

- A very soulless adaptation by the Hollywood machine.

Take, for example, the point about Arthur Dent being portrayed as a snivelling loser with all the cringe humour to support that portrayal. I really don’t enjoy cringe humour and laughing at “losers”. Having them be the main character is an even worse idea. But I can see how an American or Hollywood adaptation would take the idea of an incompetent and insecure (i.e. British) character and make them into Loser McCringefest.

The stamp of this failure to understand what the jokes actually were is all over the movie. And it seems to be a common problem when American studios take British material and try to adapt it. There are numerous TV shows that American audiences have loved, which a production studio takes as the impetus to make a version without subtitles*, and then somehow they make a pilot or show that just mangles the entire point. American audiences really deserve better.

There’s actually a good documentary on this issue done as part of the Red Dwarf DVD extras. Essentially, the production studios don’t really understand what is funny about the source material and thus what any changes they make will do to the adaptation.

So I don’t hate the movie adaptation of one of my favourite books. Because I don’t regard it as a real adaptation.

* Oh, you think I jest? I’m afraid not. When I visited the US of A I was surprised to see subtitles being used when people of non-North American origin spoke English. I mean, Scottish people having subtitles I can kinda understand, but Irish people? At least it was good to bust the myth that Americans can’t watch stuff with subtitles…

Recently on the den of inequity and monetised dumpster fires, I posted a tweet.

This idea of how the “big brains” in Silicon Valley seem to miss the point of most speculative fiction has been in my thoughts lately. I’ve lost count of the number of articles I’ve read that have sung the praises of a new tech idea that was clearly ripped straight out of a dystopic novel.

Surely it isn’t just me and every other book nerd who understands that your favourite sci-fi novel was meant to be a warning, not a goal, right?

Well, this video from Wisecrack certainly appears to be on my side.

I think it is clear why tech-bros get sci-fi (and other spec-fic) wrong. The shallow, selfish and egotistical nature of being a Silicon Valley wonk precludes you from fully understanding subtle messages in fiction. You know, subtle messages like AIs will destroy the planet, anarcho-capitalism will destroy the planet, rich/greedy people will destroy the planet, pollution will destroy the planet, etc.

Take Musk’s Neuralink. When it’s not being inhumanely tested on monkeys, there is a lot of buzz around what it could do. Like brain uploading to make you immortal. Like in that sci-fi novel where people became immortal thanks to brain uploading. Which was a novel about how brain uploading was really really bad.

But is that the message that someone like Musk would take away from Altered Carbon? Would he look at that sci-fi dystopia and think “wow, bad, let’s not make brain uploading a thing” or does he look at it and think “wow, that rich guy had a sky palace and got to be super-duper immortal rich, let’s make that brain uploading a thing”? Hint, it’s the last one. Because that novel isn’t a dystopia for someone like Musk, it’s a utopia.

This is ultimately the point of speculative fiction. It comments on our current society through fictional worlds to show us the follies of our ways. The trick is to make sure WE heed the message and stop the rich and powerful from steering us (further) into dystopia.

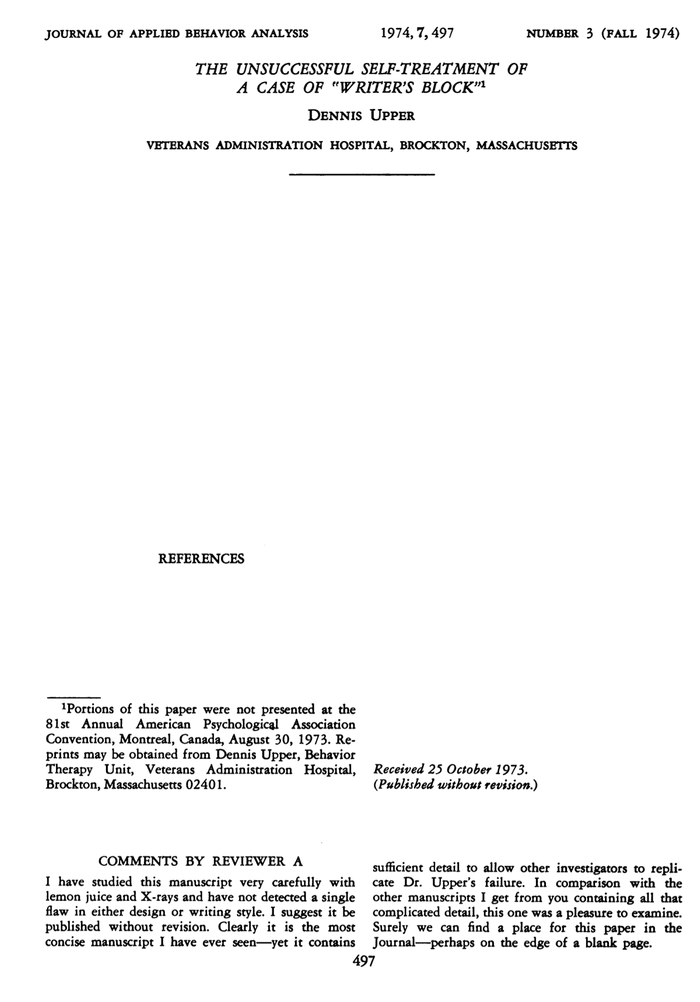

Have you ever suffered from writer’s block? It is a crippling and debilitating affliction that rivals writer’s cramp for its perniciousness.

Researchers, knowing the harm that writer’s block can cause, have spent decades researching potential treatments. The seminal work was written in 1974 by Dennis Upper.

As you can see, it was a very concise paper that encapsulated the issue perfectly. Upper’s research was semi-influential and spawned several other studies on the issue. This culminated in a meta-analysis in 2014.

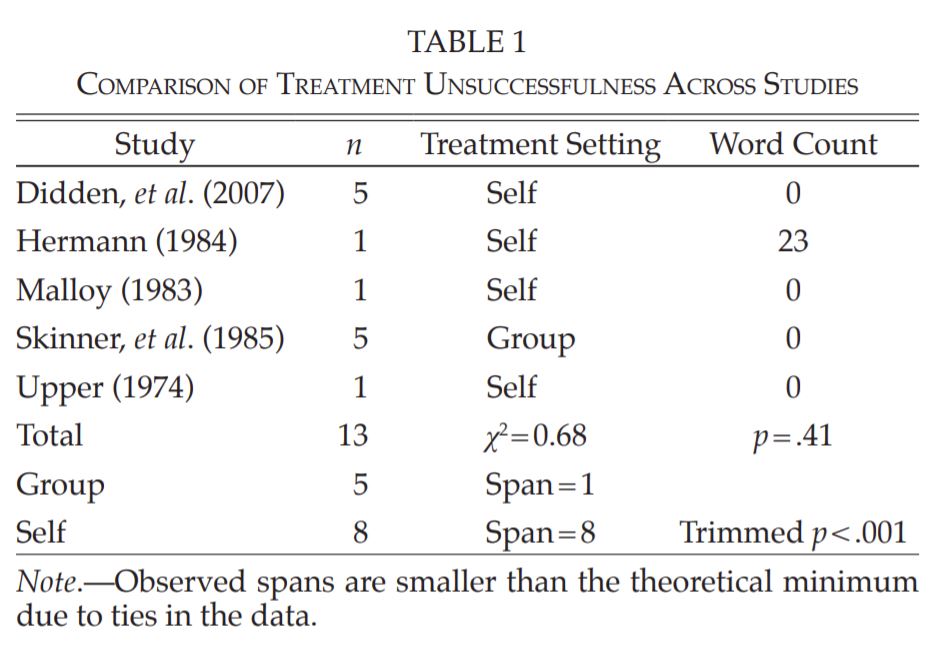

The meta-analysis covered all the relevant studies and data from 40 years of research. The study makes it clear that most treatments are unsuccessful at addressing writer’s block. Table 1, below, outlines the body of research and word counts under each treatment setting.

While these studies and the meta-analysis suggest that there is no hope for those suffering from this disease, we shouldn’t be shuffling those afflicted into a career in programming bitcoin farms. They need to be treated with dignity and respect, not cast aside into worthless activities that destroy the planet.

So, before it is too late for writer’s block sufferers, try to talk to them about how many words Stephen King writes per day. Mention that Enid Blyton wrote over 800 novels in her career, including a period of time where it wasn’t uncommon for her to write a book every two to three days. Or that Ryoki Inoue published 1075 novels and that he writes night and day without any breaks until he finishes a book.

This month’s It’s Lit! is all about stealing your story ideas from others.

I’ve previously discussed how few plots there are and how certain archetypes trace their origins back as far as we have records for. One example of this is the wandering hero, or knight errant, arriving in town to take on the bad guys before moving off for the next adventure. This is a popular genre – think Jack Reacher – and has its origins at least as far back as the Greek myths and East Asian folklore.

So is this recycling or is it about the formula storytellers use as the basic backbone to hang their narrative off of?

I’d argue the latter. This is especially true of the examples of “inspired by” or “fan-fic” from the video (and elsewhere). The storyteller will have been thinking about that awesome story and what they’d have liked to do differently, or set it in a different location.

For example, the best Die Hard sequels haven’t been in the Die Hard franchise. Instead, they have been Die Hard On a Bus, or Die Hard On a Plane, or Die Hard In the White House. The fact you probably know exactly which movies those refer to shows how adapting the basic premise doesn’t cut down on the creativity. Well, mostly.

And even if the recycling isn’t quite as overt as Die Hard On a Boat, all stories are inspired by or are a combination of the stories that came before. The storyteller has to start somewhere. Preferably not with Die Hard On a Train, the sequel to Die Hard On a Boat.

From James Joyce’s Ulysses to Bridget Jones’s Diary, you’ve probably read a book that was just a modern retelling of a well-established story. Which is to say nothing of other forms of media and their own obsessions with retellings.

And despite what your Writing 101 instincts might tell you, this is neither bad nor lazy writing—or even a new concept. Because let’s be honest: sometimes a story is just so dang good, it bears repeating. Sometimes more than once. Sometimes multiple times. I’m looking at you, Jane Austen.

Hosted by Lindsay Ellis and Princess Weekes, It’s Lit! is a show about our favorite books, genres and why we love to read. It’s Lit has been made possible in part by the National Endowment for the Humanities: Exploring the human endeavor.

Let’s take a look at the works of HG Wells with this month’s It’s Lit!

A few years ago I read and re-read several of HG Wells’ novels. What struck me was just how dry and dull the stories were.

Don’t get me wrong, the concepts, characters, plots, etc., are all good. The problem was that a story would be bookended, or told as a recounted narrative, or framed by some other technique that removed just about any tension or engagement. It was like setting fire to a classic sports car to get insurance money to buy a Toyota Corolla.

But it was a different time. Wells wrote for a different audience. We can forgive him.

Or can we?

One thing not discussed in the video is Wells’ long history of plagiarism. Several of his “big ideas” were lifted straight from other lesser-known authors. The novel that set him up as a professional writer was ripped directly from the unknown Florence Deeks. Not satisfied with having gotten away with the theft, Wells decided to ruin Deeks.

So in terms of things we didn’t know about Wells, I think his going against his own socialist values by plagiarising is near the top of the list.

H.G. Wells is a name that is synonymous with the creation of what we now know as science fiction. He effectively invented the subgenre of alien invasion, and he coined the now-ubiquitous terms “time machine,” “heat ray” and even, disputably, “the new world order.” But what most people don’t know about Wells is that although today he is predominantly known for his science fiction, his career as an SF author was pretty short.

Wells wrote dozens of novels, most of which weren’t science fiction. But despite the relatively few science fiction works he wrote in comparison to his vast oeuvre, Wells was an influential thinker – not just for the genre of science fiction, but for science’s relationship to the culture at large.


With the new Dune movie coming soon, it’s time to look at the 80s adaptation’s differences from the classic novel.

On the train to work the other day I noticed that in my carriage half the people reading books were reading Dune – mostly the first novel but some were reading other parts of the series. It was somewhat surprising.

Then about a week later I was in a book store and saw that they had an entire shelf of Dune novels and a new edition of the first novel in piles at the front of the store. It was then that I remembered Denis Villeneuve’s adaptation was coming out soon.

Now of course, I’m so ahead of the game that I read Dune *checks notes* 3 years ago. I even discussed the Dune series’ importance just last year.

For me the main thing about the David Lynch adaptation was that it needed to be a political thriller. Instead it was a drama.

Not that the film is without tension and thrills, like the characters running across the desert without thumpers, trying to avoid the worms. But the book needed to be stripped back to that political thriller plot to hang the conflict and civil war on.

I’m not sure what Villeneuve plans to do, but he is a very accomplished storyteller. It will be interesting to see if he succeeds where Lynch managed to find himself crying in the corner he’d painted himself into.

Dune is coming back to the big screen, with Denis Villeneuve and Timothee Chalamet crashing a sandworm into your HBO Max as well, so it’s time to take a look back at David Lynch’s adaptation from 1984. Based on the Frank Herbert epic, Dune is considered to be one of the greatest science fiction novels of all time. So how did an indie auteur make a big-budget Hollywood adaptation out of a dense fantasy epic? It’s time to remember that fear is the mind-killer as we ask, What’s the Difference?

With Kyle MacLachlan as Paul Atreides, Sting as the space-underwear-clad Feyd-Rautha and the soon-to-be Captain Picard Patrick Stewart, Lynch brought together a fascinating group of 80s character actors like Dean Stockwell, Linda Hunt and Jurgen Prochnow to fill out the cast. The film was a critical and commercial failure when it came out, and in light of that and prior failed attempts to adapt the sci-fi fantasy all-timer, including Jodorowsky’s Dune, the book was long thought to be unfilmable. With the Atreides and Harkonnen rekindling their big-screen rivalry in the form of Oscar Isaac, Josh Brolin, Zendaya and a cast as stellar as the one Lynch assembled, we’ll see how 2021’s adaptation fares.

Sci-Phi: Science Fiction as Philosophy by David K. Johnson


My rating: 5 of 5 stars

Science Fiction: more than just pew-pew noises.

Science Fiction as Philosophy is a Great Courses series in which each lecture uses an example sci-fi movie or show (plus a few supporting examples) to discuss a philosophical concept. This both illustrates the depth of sci-fi and creates a starting point for drawing various philosophical ideas together. David K Johnson presents this broad-ranging series.

The audiobook/lecture series is much like the rest of the Great Courses and includes course notes. The notes book in this instance is presented as a lot of dot points – I don’t remember this being the case in other Great Courses. It was incredibly handy for doing the lateral reading.

This was a fantastic series. The lecturer was able to cover a lot of material in a concise and accessible manner. Johnson also managed to retain a sense of humour that was entertaining in what could have been dry and boring subject matter.

It was great to revisit so many of my favourite sci-fi movies and shows to discuss them with a philosophical eye. This was generally well done and interesting. The deeper insights were not necessarily surprising to sci-fi fans but I generally found a bit more depth to the material here than in the usual pop-philosophy discussions.

That said, there were times when the lectures felt like the CliffsNotes of philosophy, which isn’t that surprising for something covering so much ground. For some topics, I noticed that the material was a shortened version of sources like the Stanford Encyclopedia of Philosophy. So this could feel a bit light on if you are already familiar with the philosophy being discussed.

Overall, I really enjoyed this Great Courses series and want to dive into some of the other series David K Johnson has made.

Comments while reading:

Lots of great material and subject matter. Highlighted a few of my old favourites, like The Thirteenth Floor.

I have so many issues with the Simulation Hypothesis and its 20% chance figure. Personally, I think we should dismiss it in much the same way we dismiss the Devil’s Veil, Brain in a Vat, Matrix, and other similar ideas. Materialism is a much better explanation, as discussed in a previous lecture/chapter.

My main issue with the idea is that the probability matrix and reasoning are essentially Pascal’s Wager (which is predated by several other versions). The problem is that you can use this reasoning to justify just about anything. Replace belief that we’re living in a simulation with belief in magic or god or superman or evil superman or the free market. Nonsense can be granted a “logical” and “rational” foundation which could then be used to justify atrocities – e.g. you could justify killing people because it’s only a simulation.

Pascal’s wager: Believing in and searching for kryptonite — on the off chance that Superman exists and wants to kill you. https://rationalwiki.org/wiki/Pascal%…

The section on militarism vs pacifism vs just war is a little disappointing. It starts strong with the castigation of militarism. Pacifism is covered reasonably, the best bit being the dispelling of the idea that pacifism is just about rolling over in the face of violence, rather than finding non-violent ways to address violence/militarism. But then Johnson kinda falls prey to several ahistorical assumptions and militaristic ideas in his criticism of pacifism, which leads into just war being presented as some sort of compromise between the two.

I disagree here. I’d argue that just war isn’t a middle ground but a pseudo-intellectual justification for militarism. Take any of the given requirements of just war and you won’t find a single war (or conflict) that meets the criteria. Even applying it historically (it’s meant to be used before and during a war), you have to be pretty selective in your cherry-picking to get things to fit. E.g. Hitler and the Nazis were bad, so WW2 was all good… well, except the conditions for WW2 were sown at the close of WW1 and could easily have been avoided, the war supplies to Germany could easily have been cut off (although that would have stopped US companies making big $$ from the Nazis), and the Nazi party need not have been internationally endorsed. In other words, the only way you can meet the just war criteria is to turn a blind eye for a couple of decades, wait for atrocities to start happening, and use those as a post-hoc reason to go to war (even though the atrocities weren’t known about until after going to war).

There’s nothing like being reminded of how terrible Robert Nozick’s philosophy was/is. “Rawls was wrong because people earn stuff, even when they cheated or got lucky, and most actually get lucky, BUT THEY EARNED IT DAMMIT!!”

I think Johnson is way off the mark on the lukewarmerism of Snowpiercer. I’m not sure if this is just a really bad take on his part or if he is unaware of the arguments around geoengineering solutions to climate change. Probably a bit of both. Point being, critics of geoengineering see it as risking unforeseen consequences similar to those of burning fossil fuels. This means Snowpiercer exists in a world where delay by the powerful required hubristic action that once again disproportionately impacted the poor. Maybe the problem is that Johnson was trying to say something fresher, since Snowpiercer has been written about quite a bit from the class struggle perspective, while also fitting it within his lecture structure.

Sweep in Peace by Ilona Andrews

My rating: 5 of 5 stars

Gotta wonder if magic would also work to stop weapons manufacturers perpetuating war?

It’s been slow for the Gertrude Hunt Inn since Dina Demille stopped an intergalactic assassin in her neighbourhood. But on short notice, Dina is called upon to host trilateral peace talks for groups who would really like to kill everyone. From looking after one guest to hosting feasts for dozens, Dina is under the pump to not only look after everyone but see that the talks are a success. She’ll be ruined otherwise.

There are times when I really love my library. After finishing Clean Sweep, I put my name on the reserve list for Sweep in Peace. The queue was a month long, so I started another novel in the interim. Just as I was debating whether to persist with that far less entertaining novel, Sweep in Peace became available. I can only assume the quick turnaround of a few days was due to how fast a read this series is.

This was quite an ambitious novel. The premise of the conflict is not an easy one to navigate. I especially appreciated the peace negotiation as it is almost the polar opposite of what most novels would do with a war. Diplomacy? Surely we can just shoot the diplomat full of arrows and then commit a genocide?*

While successfully pulling off this ambitious premise, Sweep in Peace still manages to retain its fast pace, humour, and charm. The emergent humour that naturally fits within the scenes is particularly good.

I’m already reading book three in the series, One Fell Sweep. That should tell you everything you need to know about how much I enjoyed Sweep in Peace.

* If you don’t get this reference to one of the least subtle comments on diplomacy and promotions of war as awesome, then I’m glad. Old Man’s War was bad on many levels.

Better than Life by Grant Naylor

My rating: 5 of 5 stars

The only time watching snooker isn’t boring is when you scale it up.

The crew of Red Dwarf are trapped in the most addictive game of all time: Better Than Life. Most people become trapped because they don’t even realise they are in the game, but Lister, Rimmer, Cat, and Kryten know it. They’ve even thought of leaving. Can they get out before Holly and the Toaster manage to crash into a black hole?

After reading Infinity Welcomes Careful Drivers (Red Dwarf #1), I couldn’t help but continue straight into Better Than Life. The former finished with the Red Dwarf crew stuck in BTL, which is something of a cliffhanger. BTL similarly finishes on a bit of a cliffhanger that appears to lead into Backwards (although Last Human is also a direct sequel to this, because reasons*).

Much like the first novel, this fleshes out ideas and episodes from the first few seasons of Red Dwarf. While it has been quite a while since I watched the show, I think the books do more with the material and rely less on banter/insults for humour. And like the first novel, I was pleasantly reminded of just how funny these books (and the show) are.

I’m looking forward to reading Backwards and Last Human soon.

* The reason being that Rob Grant and Doug Naylor had two more books on their contract to deliver and they had decided to separate as a writing team. The exact reasons for the separation are unclear, even to the duo themselves it seems, and Doug Naylor has continued Red Dwarf without Grant.

With a new Bond movie set for theaters, it’s time to look back at the first James Bond adventure and ask, What’s the Difference?

I still haven’t picked up any of the Bond books. Previously I’ve mentioned having vague memories of reading a couple when I was younger. But honestly, they could have been Biggles books.

Side note: as a kid I always thought that Biggles and his friends were gay. I didn’t really know what that was exactly, but they were definitely it. Monty Python agreed. Pity it wasn’t championed a bit more.

Seeing the differences outlined between the Dr No book and film does highlight an issue with plot vs character adaptation. Especially for a series. Change one and you have to change the other.

Although, it would be interesting to see how a cardboard-thin character could be slotted into any plot without change. Like, say, the majority of Jason Statham’s roles.

No Time to Die finds James Bond, Her Majesty’s most infamous double-oh, retired in Jamaica. But we’re going all the way back to the first time Sean Connery as 007 found his way to the Caribbean island in 1962’s Doctor No. But while it was the first Bond adventure in the film franchise, it was the sixth book author Ian Fleming published. So how did the filmmakers set about adapting the middle of Bond’s novel career for the beginning of his film escapade? Dust off your license to kill because it’s time to ask, “Difference… What’s the Difference?”

Did you know that Quentin Tarantino had novelised his ninth film? Neither did I. Let’s take a look and ask, What’s the Difference?

As a Tarantino fan since the early 90s – geez, that makes me sound even older than I am – I have to come clean on Once Upon A Time In Hollywood. I didn’t like it.

I’ll even go a step further and say that his previous film, Hateful Eight, wasn’t good either.

Unlike Hateful Eight, which had a decisive moment when the film fell apart (Tarantino’s voice over setting up the third act just ruined everything for me), Once Upon A Time In Hollywood was entirely pedestrian. It always felt like a film avoiding being anything other than a love letter to Hollywood films of the 60s.

In fairness to the movie, Tarantino was clearly trying to subvert many of the usual movie moments and be more about actors making great films. For example, the scene at the ranch was set up for a fight between Pitt’s character (Cliff Booth) and the Manson acolytes. Instead, Tarantino subverts that moment and there is no fight, allowing us plenty more time for DiCaprio’s character to learn about method acting from his child co-star.

That the novelisation is quite different from the film isn’t particularly surprising. It’s pretty difficult to make Brad Pitt into a thoroughly unlikable character in a movie. Something to do with charisma and production credits. But the book is unconstrained by actor charisma, which makes it a good opportunity to throw the character under the bus.

Regardless of Tarantino’s future literary aspirations, I hope his tenth/final film is able to cement his career as one of the greats.

Once Upon a Time in Hollywood: Who is Cliff Booth anyway?

Once Upon a Time in Hollywood is a celebrated installment in writer/director Quentin Tarantino’s oeuvre. So when he came out with a book adaptation of the story, we were first in line to read it. But was the book markedly different from the film, and do those differences mean something big? We think so and we’ll explain in this Book vs. Film on Once Upon a Time in Hollywood – The New Ending.

This month’s It’s Lit covers Amatory Fiction.

This is an interesting video for several reasons. I’m always amused when the topic of rethinking “great authors” comes up and people without pearls start clutching them.

The literary canon excluding certain types of authors and books shouldn’t be news to people. But there always seems to be plenty of reactionary debate making excuses for why, for example, Grapes of Wrath got published while Sanora Babb’s Whose Names Are Unknown (written the same year on the same topic, both using Babb’s notes) took 65 years to be released. Yeah, that was a thing.

I’ve covered this before when calls have been made to increase the diversity of the literary lists for students in the hopes that a more diverse range of texts will be taught. Getting people who don’t read much to acknowledge that “literary greats” are less about talent than luck (timing, contacts, $$, etc.) is a hard task. Trying to get those same people to acknowledge that women, people of colour, and non-Americans might have written books throughout history is often a hurdle they are unwilling to even attempt jumping.

Which brings me around to one of my favourite topics here: snobbery and guilty pleasures. The It’s Lit video shows how snobbery essentially relegated an important part of literature to the unknown and unappreciated baskets of history. Combine that snobbery with a bit of the old bigotry of the pants and you will have people trying to ignore a segment of literature that broke boundaries (e.g. Behn wrote one of the earliest anti-slavery novels).

For more on Sanora Babb’s novel, it is worth watching this video:

The guy typically credited with inventing what we know as the modern novel was Miguel de Cervantes with his cumbersome 800+ page book, Don Quixote. But what if I told you that the real antecedent for the modern novel was created by… ladies.

Before the rise of what would become the modern novel, there was Amatory fiction. Amatory fiction was a genre of fiction that became popular in Britain in the late 17th century and early 18th century. As its name implies, amatory fiction is preoccupied with sexual love and romance. Most of its works were short stories, it was dominated by women, and women were the ones responsible for sharing and promoting their own work.

Hosted by Lindsay Ellis and Princess Weekes, It’s Lit! is a show about our favorite books, genres, and why we love to read. It’s Lit has been made possible in part by the National Endowment for the Humanities: Exploring the human endeavor.