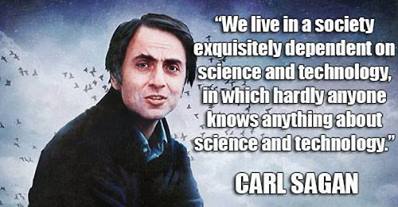

With the rebirth of Cosmos on TV, Neil DeGrasse Tyson and the team have brought science back into the mainstream. No longer is science confined to the latest puff piece on cancer research that is only in the media because a) cancer and b) the researchers are pressuring the funding bodies to give them money. The terms geek and nerd have stopped being quite the derogatory terms they once were. We even have science memes becoming as popular as Sean Bean “brace yourself” memes.

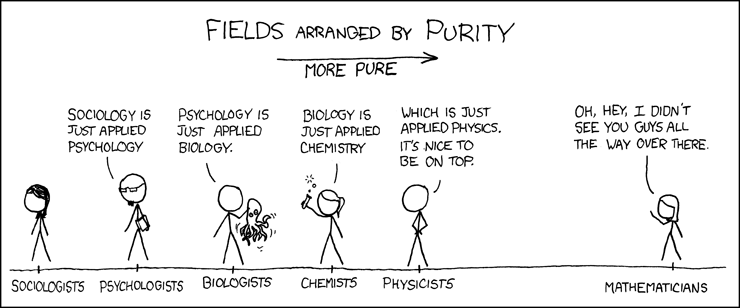

This attention has also cast a light on the scientific process itself, with many non-scientists and scientists passing comment on the reliability of science. Nature has recently published several articles discussing the reliability of studies’ findings. One article, which talks about statistical errors, shows why the hard sciences laugh at the soft sciences. I mean, have these “scientists” never heard of selection and sample bias? Yes, there is a nerd pecking order, and it is maintained through pure snobbishness, complicated-looking equations, and how clean the lab-coat remains.

As a science nerd, I feel the need to weigh in on this attack on science. So I’m going to tear apart, limb by limb, a heavy-hitting article: Cracked.com’s 6 Shocking Studies That Prove Science Is Totally Broken.

To say that science is broken or somehow unreliable is nonsense. To say that peer review or statistical analysis is unreliable is also nonsense. There are exceptions to this: sometimes entire fields of study are utter crap, sometimes entire journals are just crap, sometimes scientists and reviewers suck at maths/stats. But in most instances these things are not-science, just stuff pretending to be science. Which is why I’m going to discuss this article.

A Shocking Amount of Medical Research Is Complete Bullshit

#6 – Kinda true. There are two problems here: media reporting of medical science and actual medical science. The biggest issue is the media reporting of medical science, hell, science in general. Just look at how the media have messed up the reporting of climate science for the past 40 years.

Of course, most of what gets reported as medical studies are preliminary studies. You know: “we’ve found a cure for cancer, in a petri dish, just need another 20 years of research and development, and a boatload of money, and we might have something worth getting excited about.” The other kind that gets attention isn’t a proper medical study at all but a spurious claim by someone trying to peddle a new supplement. So this issue is more about the media being scientifically illiterate than anything.

Another issue is the part of medical science that Ben Goldacre has addressed in his books Bad Science and Bad Pharma. Essentially you have a bias toward positive results being reported, while negative results go unpublished. This isn’t good enough. Ben goes into more detail in his books, which are worth reading alongside the Nature articles I previously referred to.

Many Scientists Still Don’t Understand Math

#5 – Kinda true. Math is hard. It has all of those funny symbols and not nearly enough pie charts. Mmmm, pie! If a reviewer in the peer review process doesn’t understand maths, they will often reject papers, calling the results “black box”. Other times the reviewers will fail to pick up the mistakes made, usually because they aren’t getting paid and that funding application won’t write itself. And that’s just the reviewers. Many researchers don’t do proper trial design and often pass off analysis to specialists who have to try to make the data work despite massive failings. And the harsh reality is that experiments are always a compromise: there is no such thing as the perfect experiment.

Essentially, scientists are fallible human beings like everyone else. Which is why science itself is iterative and papers include a methods section, so that results can be independently confirmed before being accepted.

And They Don’t Understand Statistics, Either

#4 – Kinda true, but misleading. How many people understand the difference between a result being statistically significant and it actually being significant? Here’s a quick example:

This illustrates that when you test for something at the 95% confidence level you still have a 1 in 20 chance of a false positive arising from natural variability alone. Some “science” has been published that exploits this false positive rate by going on a statistical fishing trip (e.g. anti-GM paper). But there is another aspect: if you get enough samples, and enough data, you can get a statistically significant result that isn’t a meaningful result. An example would be testing new fertiliser X and finding, with a p-value under 0.05 (i.e. statistically significant), that the grain yield is 50kg higher in a 3 tonne per hectare crop. Wow, statistically significant, but at 50kg/ha, who cares?!
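For the nerds who want to see the numbers, here is a rough sketch of both problems in Python. It is only an illustration under stated assumptions: the function names are mine, the tests are assumed to be independent, and the 500 kg/ha plot-to-plot standard deviation and the plot counts are made-up values, not data from any real fertiliser trial.

```python
import math


def familywise_false_positive_rate(num_tests, alpha=0.05):
    """Chance of at least one false positive across independent tests
    when none of them has a real effect behind it."""
    return 1.0 - (1.0 - alpha) ** num_tests


def two_sided_p_value(mean_diff, std_dev, n_per_group):
    """Two-sided p-value for a two-sample z-test with equal group sizes
    and a known, shared standard deviation (a simplifying assumption)."""
    standard_error = std_dev * math.sqrt(2.0 / n_per_group)
    z = mean_diff / standard_error
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)) for a standard normal
    return math.erfc(abs(z) / math.sqrt(2.0))


if __name__ == "__main__":
    # Fishing trip: the more things you test, the more likely one "hits"
    for num_tests in (1, 5, 20):
        rate = familywise_false_positive_rate(num_tests)
        print(f"{num_tests:>2} tests: {rate:.0%} chance of a false positive")

    # Hypothetical fertiliser trial: 50 kg/ha gain, 500 kg/ha std dev
    for n_plots in (10, 1000):
        p = two_sided_p_value(50.0, 500.0, n_plots)
        print(f"{n_plots:>4} plots per treatment: p = {p:.3f}")
```

Run 20 independent tests at the 5% level and the chance of at least one false positive is roughly 64%, which is why fishing trips catch fish. And with these made-up numbers, the same 50kg/ha difference is nowhere near significant with 10 plots per treatment but slips under 0.05 with 1000: the effect hasn’t changed, only the sample size has.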

But these results will be reported, published, and talked about. It is easy for people who haven’t read and understood the work to get over-excited by these results. It is just as easy for researchers to get over-excited; they are only human. But this is why we have the methods and results sections in science papers, so that calmer, more rational heads prevail. Usually after wine. Wine really helps.

Scientists Have Nearly Unlimited Room to Manipulate Data

#3 – True but misleading. Any scientist *could* make up anything that they wanted. They could generate a bunch of numbers to prove that, for an example of bullshit science, the world is only 6000 years old. But because scientists are a skeptical bunch, they’d want some confirming evidence. They’d want that iterative scientific process to come into play. And the bigger that claim, the more evidence they’d want. Hence why scientists generally ignore creationists, or just pat them on the head when they show up at events: aren’t they cute, they’re trying to science!

But there is a serious issue here. The Nature article I referred to was a social sciences study, a field that is rife with sampling and selection bias. Ever wonder why you hear “scientists say X is bad for you” then a year later it is, “scientists say X is good for you”? Well, that is because two groups were sampled and correlated for X, and as much as we’d like it, correlation doesn’t equal causation. I wish someone would tell the media this little fact, especially since organic food causes autism.

Other fields have other issues. Take a look at health and fitness studies and spot who the participants were: generally, they are university students who need the money to buy tinned beans and beer. Not the most representative group of people and often they are mates with one of the researchers, all 4 of them. Not enough participants and a biased sample: not the way to do science. The harder sciences are better, but that isn’t to say that there aren’t limitations. Again, *this is why we have the methods section so that we can figure out the limitations of the study.*

The Science Community Still Won’t Listen to Women – Update

#2 – When I first wrote this I disagreed, but now I agree, see video below. As someone with a penis, my mileage on this issue is far too limited. That is why it was only when a few prominent people spoke out about this issue that I realised science is no better than the rest of society. It hurts me to say that.

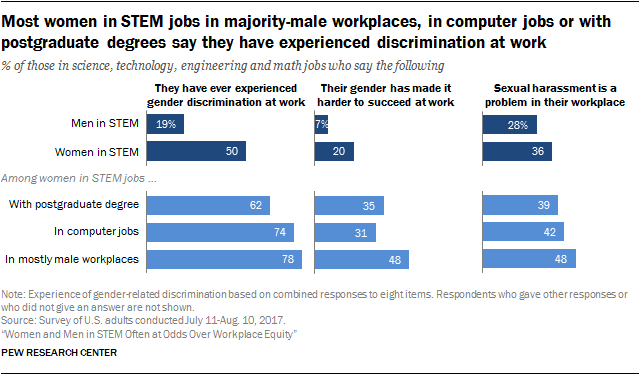

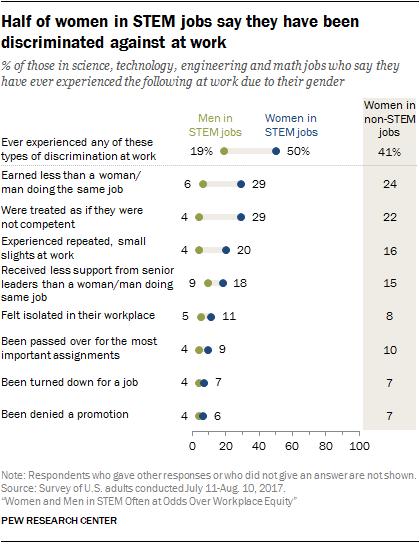

There is still a heavy bias toward men in senior positions at universities and research institutes, women get paid less, women are assumed to be less competent scientists, and apparently, it is okay to ogle female scientists’ boobs… Any of these sound familiar to the rest of society? This is gradually changing, but you have to remember what age those senior people are and what that generation required of women (quit when they got married, etc). That old guard may have influence but they’ll all be dead or retired soon, at which point their influence will be confined to the letters to the editor in the newspaper. After seeing the video below, especially the way the question was asked, I think it is clear that the expectations placed on women create barriers to entering and progressing through careers in science (the racism is similar and is one I see as a big issue). So it starts long before people get into science, then it continues through attrition.

Fast forward to 1:01:31 for the question and NDGT’s* answer (sorry, embed doesn’t allow time codes).

Recently there has been a spate of very public sexist science moments. Whether it be telling female scientists they should find a male co-author to improve their science, or Nobel Laureates who don’t want to be distracted by women in the lab, it is clear that women in science don’t get treated like scientists. Which is why I find the Twitter response to the Tim Hunt debacle, #distractinglysexy, to be exactly the sort of ridicule required. Recent events suggest that there are at least repercussions now.

Scientists are meant to be thinkers, they are meant to be smart, they are meant to follow the evidence. They aren’t meant to behave like some cretin who hangs out on the men’s rights movement subreddit. Speaking of which, watch science communicator Emily Graslie discuss the comments section of YouTube.

Here’s another from Thought Cafe and Dr. Renée Hložek.

Update: After the first photo of a black hole was published, women in STEM were back in the headlines, with people wanting to again marginalise women in STEM – not to mention how the media love to promote the “lone genius” when science is a team thing. Vox had a great article on it which included some great graphs from Pew Research.

It’s All About the Money

#1 – D’uh and misleading. Research costs money. *This is why we have the methods section, so that we can figure out the limitations of the study.* Money may bring in bias, but it doesn’t have to, nor does that bias have to be bad or wrong. Remember how I said above that science is an iterative process? Well, there is only so big a house of cards that can be built under a pile of bullshit before it falls down in a stinky mess. Money might fool a few people for a while (e.g. climate change denial) but science will ultimately win.

Ultimately, science is the best tool we have for finding out about our reality, making cool stuff, and blowing things up. Without it we wouldn’t be here, this article wouldn’t be possible, and we wouldn’t know what a Bill Nye smackdown looks like. Sure, there is room for improvement, especially in the peer review process and funding arrangements, and science is flawed because it is done by humans, but science is bringing the awesome every day: we have to remember that fact.

*Wow, who’d have thought including Neil DeGrasse Tyson in this context would age quite so badly!?